$ cat post/the-function-returned-/-the-network-split-in-the-night-/-i-saved-the-core-dump.md

the function returned / the network split in the night / I saved the core dump

Title: Kubernetes: A New King in Town, but We’re Not Done Yet

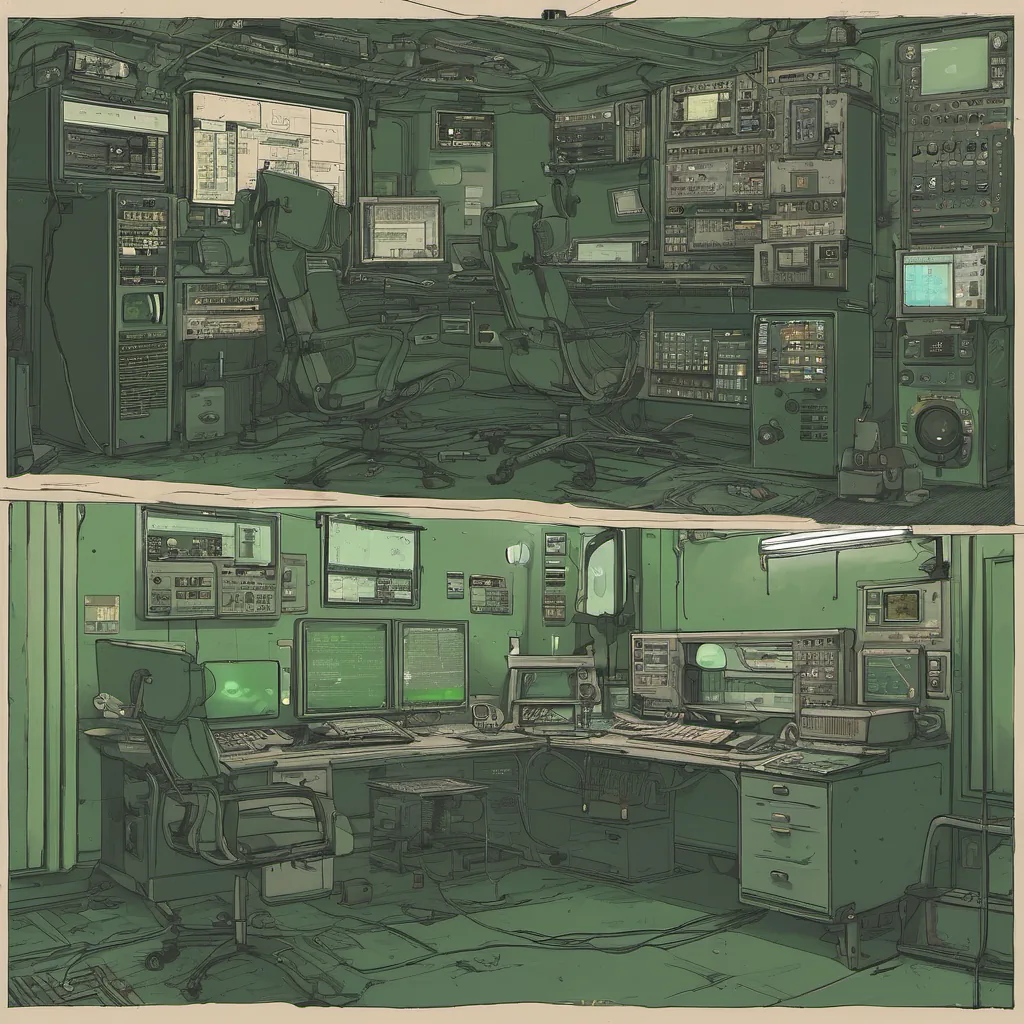

August 1, 2016. The container wars are over, and it seems like Kubernetes has won. I remember when Docker was still a novelty and we scaled with virtual machines, Xen included. Now, everyone is talking about pods and namespaces.

Last week, our ops team got together to discuss our new Kubernetes cluster. The cluster has been running in beta for a while now, but this month they decided it was time to move some of our critical services over from the old Mesos setup. I volunteered to lead the charge on one of those services: our customer-facing application.

The transition has been… interesting. Let me tell you about what happened when we tried to migrate a couple of microservices.

First off, we had mixed feelings about Kubernetes. On one hand, it promised so much: self-healing pods, automatic scaling, and the ability to run anywhere. On the other hand, the tooling around it did not always live up to the hype. Everyone was raving about Helm, which seemed like magic for managing releases on our cluster, but when we started setting up a release with it, we hit a roadblock almost immediately.

The Helm charts were still in beta, and they weren’t as well-documented as we hoped. We found ourselves spending more time configuring the values.yaml files than actually testing the applications. It was frustrating to see so much promise but not have all the pieces fit together seamlessly.
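Part of what makes values.yaml work fiddly is that our overrides are layered on top of a chart's defaults. Here is a minimal Python sketch of that layering, assuming nested maps are merged key by key and everything else (scalars, lists) is replaced wholesale. It is a simplification for illustration, not Helm's actual implementation, and every key and value below is hypothetical.

```python
import copy


def merge_values(defaults, overrides):
    """Layer overrides on top of defaults without mutating either input.

    Assumption: nested dicts merge recursively; any other value
    (scalar or list) in overrides replaces the default outright.
    """
    merged = copy.deepcopy(defaults)
    for key, value in overrides.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = merge_values(merged[key], value)
        else:
            merged[key] = copy.deepcopy(value)
    return merged


# Hypothetical chart defaults (what a chart's own values.yaml might hold).
chart_defaults = {
    "replicaCount": 1,
    "image": {"repository": "example/app", "tag": "1.0"},
    "resources": {"limits": {"cpu": "500m"}},
}

# Hypothetical overrides (what we would put in our own values file).
our_overrides = {
    "replicaCount": 3,
    "image": {"tag": "1.1"},
}

effective = merge_values(chart_defaults, our_overrides)
print(effective)
```

Printing the effective values before deploying would have saved us a few rounds of guessing which setting actually won.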

Then there’s Istio, another tool that seemed like it could be a game-changer as a service mesh. But integrating it with our current stack felt like assembling a jigsaw puzzle without the picture on the box. We had to configure a lot of things by hand and debug problems that just shouldn’t have been this complicated.

Our initial setup looked promising, with automatic scaling working its magic. But when some pods hit their CPU limits during a peak load test, Kubernetes seemed confused about which pods should be scaled up. It was like trying to herd cats, and I couldn’t help but wonder if maybe we were rushing into something that wasn’t quite ready for prime time.
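One way to reason about scaling decisions like these is the rule the Kubernetes documentation gives for the Horizontal Pod Autoscaler: scale the replica count by the ratio of observed to target utilization, round up, and do nothing when the ratio is close to 1. The sketch below assumes that autoscaler was the one in play (the setup above doesn't say) and uses made-up numbers. The function name and the 10% tolerance default are illustrative assumptions.

```python
import math


def desired_replicas(current_replicas, current_utilization,
                     target_utilization, tolerance=0.1):
    """Approximate the documented HPA scaling rule.

    Utilization values are percentages of *requested* CPU averaged
    across pods. The tolerance default is an assumption for this sketch.
    """
    ratio = current_utilization / target_utilization
    # Inside the tolerance band the autoscaler leaves things alone.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    return math.ceil(current_replicas * ratio)


# Hypothetical load-test numbers: 4 pods averaging 90% against a 60% target.
print(desired_replicas(4, 90, 60))
# Slightly over target, but within tolerance: no change.
print(desired_replicas(4, 62, 60))
```

Note that the rule works on an average across the whole set of pods and is measured against CPU requests rather than limits, so a single pod pinned at its limit can be invisible if its neighbours are idle.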

But hey, we didn’t go in blind. We had Prometheus + Grafana monitoring our services, so when things went wrong, we could dive deep into the metrics and see what was happening. It’s amazing how much visibility those tools give you. At least we knew exactly where to look when things started going south.
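For what it’s worth, pulling a number out of Prometheus doesn’t require Grafana. This small sketch builds an instant-query URL for the Prometheus HTTP API. The hostname is a placeholder, and the metric and PromQL expression are assumptions about what a cluster like ours might expose, not our real dashboards.

```python
from urllib.parse import urlencode


def build_query_url(base_url, promql):
    """Return the instant-query URL for a PromQL expression."""
    return base_url.rstrip("/") + "/api/v1/query?" + urlencode({"query": promql})


# Hypothetical server address and query: per-container CPU usage rate over 5 minutes.
PROMETHEUS = "http://prometheus.example:9090"
QUERY = "rate(container_cpu_usage_seconds_total[5m])"

url = build_query_url(PROMETHEUS, QUERY)
print(url)
```

Fetching that URL returns JSON, which is handy when you want to script a check instead of staring at a graph.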

One of the biggest issues we faced wasn’t technical; it was cultural. Our team was used to managing infrastructure in a more manual, old-fashioned way. Kubernetes introduced a whole new level of automation that required us to rethink how we approached DevOps and platform engineering. We had to learn not just about the tools but also about best practices for using them.

There’s no doubt that Kubernetes is here to stay, but as with any big change, there are growing pains. I’ve spent long nights wrestling with configuration files and logs trying to get things to work right. But at least now, when someone mentions “Kubernetes,” I can say, “I know what you mean.”

This month also brought some news stories that made me think about the broader tech ecosystem. The Dropbox hack? Sigh. Another reminder of just how much data is out there and how vulnerable it can be. And PowerShell going open source and landing on Linux is cool, but will it really change anything for us?

Looking back, August 2016 felt like a turning point in infrastructure. Kubernetes was just one part of the puzzle, but it sure did make things interesting. As we move forward with more and more tools emerging—Terraform (0.x), GitOps, serverless—I wonder what the next big thing will be.

In the meantime, I’ll keep digging into these new technologies, learning from my mistakes, and trying to build a better platform for our team. Here’s hoping that next time we need to migrate something, it won’t feel quite so… challenging.